Event JSON

{

"id": "7464a72efbb36f6a3274e1b0ebc8c4f0b9a06c08abc6f0246842efa0791cb04f",

"pubkey": "9e0d3a2c5bdf7161b6f248e45af480a3d5ad923984d70ca42179b525e27caab8",

"created_at": 1743729421,

"kind": 1,

"tags": [

[

"e",

"cc03791734c3dd95f295de65a9aa464c17399a39854047ce149167975375926a",

"",

"root"

],

[

"e",

"d5a1a97084cd1616aac673dbc9fe2491095a3922448f6d4cf7d9008b346a10ca"

],

[

"e",

"111833f85bde4d7c222c70f318a5748141ee40bf31a0db594f59181e447b18fc",

"",

"reply"

],

[

"p",

"1549ed4be71d1a70ea2dca4596cd0c54c61aea485c6e4e5a8ca463758c98dd4a"

],

[

"p",

"9e0d3a2c5bdf7161b6f248e45af480a3d5ad923984d70ca42179b525e27caab8"

],

[

"r",

"https://image.nostr.build/49dfda903e7786b651b2eed9185fc519429789b33b05e7f4b31d49a383f44932.jpg"

],

[

"r",

"https://stats.stackexchange.com/questions/2691/making-sense-of-principal-component-analysis-eigenvectors-eigenvalues#140579"

],

[

"r",

"https://image.nostr.build/95d9d9eea4c597c66b564cfae2656142b5147e85b7ed6ea2bb580226daabe421.jpg"

],

[

"r",

"https://image.nostr.build/571a24bb44f1d5d62be71ce3c6a86f79f2dc7122d5d933e4c5bb8fb6484d99ea.jpg"

],

[

"imeta",

"url https://image.nostr.build/49dfda903e7786b651b2eed9185fc519429789b33b05e7f4b31d49a383f44932.jpg",

"x 01fb94c2cd8dbfd724e04387e034d77f9b97e5e39802d57c6f30be3c0aad1add",

"size 212854",

"m image/jpeg",

"dim 1024x1526",

"blurhash ^ARysgxu~qxu%M%MWBRjfQayofay00ofayWB-;ay_3ofj[j[M{WB_3ofWBayIUWB%Mt7Rjayt7of?bayRjofRjayM{oft7ayj[ayxuj[j[j[ayof",

"ox 01fb94c2cd8dbfd724e04387e034d77f9b97e5e39802d57c6f30be3c0aad1add",

"alt "

],

[

"imeta",

"url https://image.nostr.build/95d9d9eea4c597c66b564cfae2656142b5147e85b7ed6ea2bb580226daabe421.jpg",

"x 60662292731a86f7059799d3a733f483a024f2093da0979c7d6651ab32cd9b0c",

"size 24269",

"m image/jpeg",

"dim 1024x398",

"blurhash QIPjcIIq~8oI%1-oNIxuofyURk$$xujYxaWYR*t7-qxtIWt7RkNHofWCj@",

"ox 60662292731a86f7059799d3a733f483a024f2093da0979c7d6651ab32cd9b0c",

"alt "

],

[

"imeta",

"url https://image.nostr.build/571a24bb44f1d5d62be71ce3c6a86f79f2dc7122d5d933e4c5bb8fb6484d99ea.jpg",

"x 75a7b3f44302d350ba9c5dd7131f0fd7f8b1a9472dcfc41fd1ea1016d1295d2c",

"size 39732",

"m image/jpeg",

"dim 1024x1097",

"blurhash {ORMrLWY^%%2NGt7ofxu~UofW?t7M|Rkt6ofVtj[NHRks.ayofs:bsWCoekCs-t6R-WCxbofWYayWCWBWVR+S1WBt6j@j?oej[j@oMofRkj]WCWCoffQxtWBt6WBfkj@axj[M}ofWBofj@a#j[jZ",

"ox 75a7b3f44302d350ba9c5dd7131f0fd7f8b1a9472dcfc41fd1ea1016d1295d2c",

"alt "

]

],

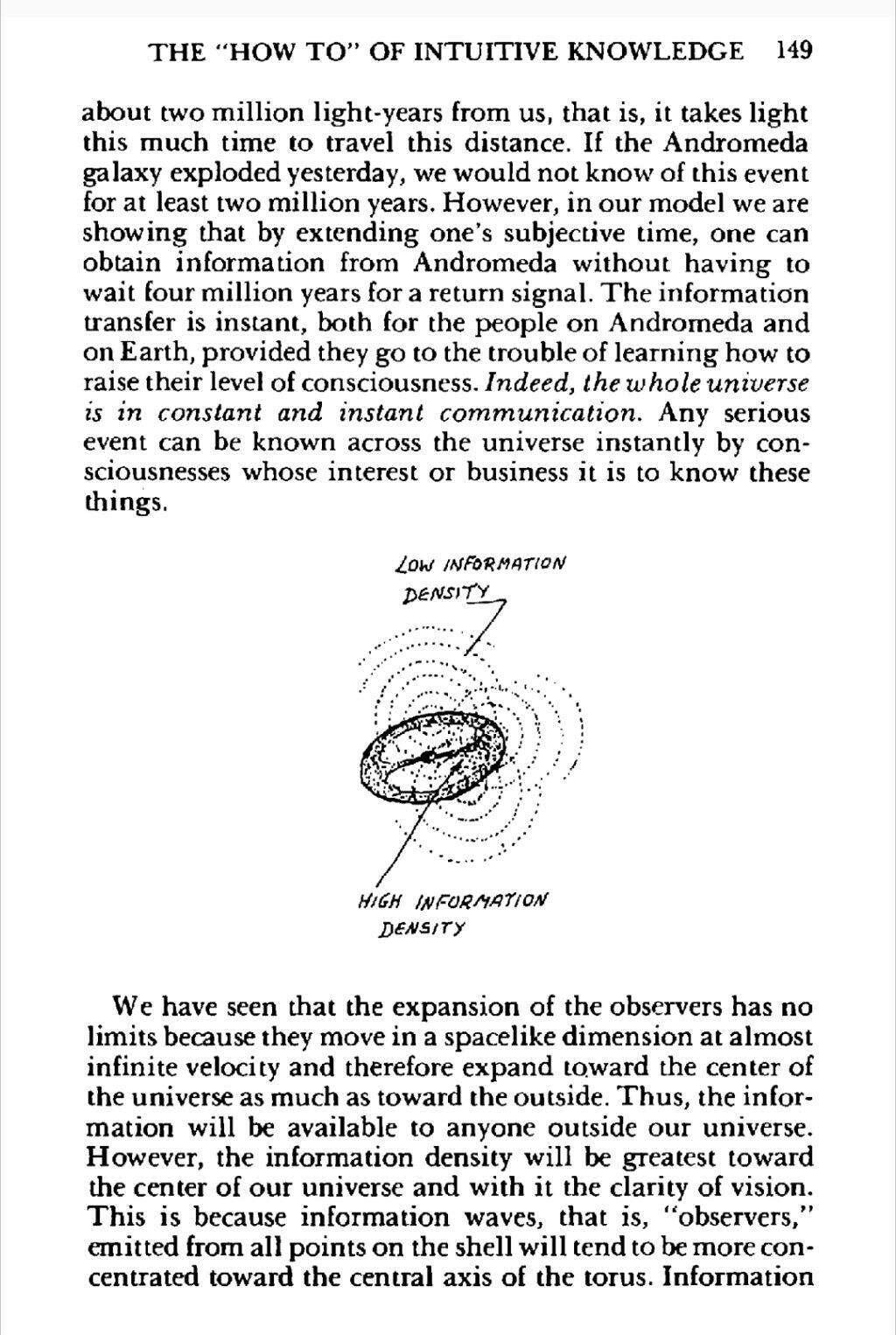

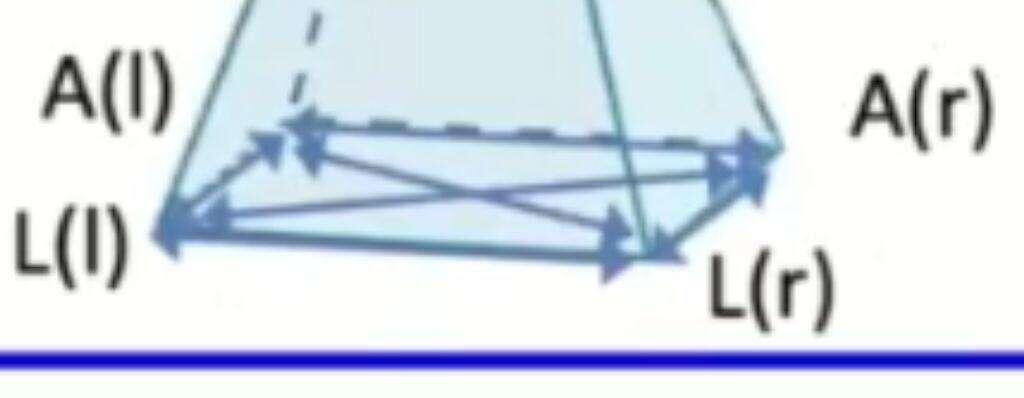

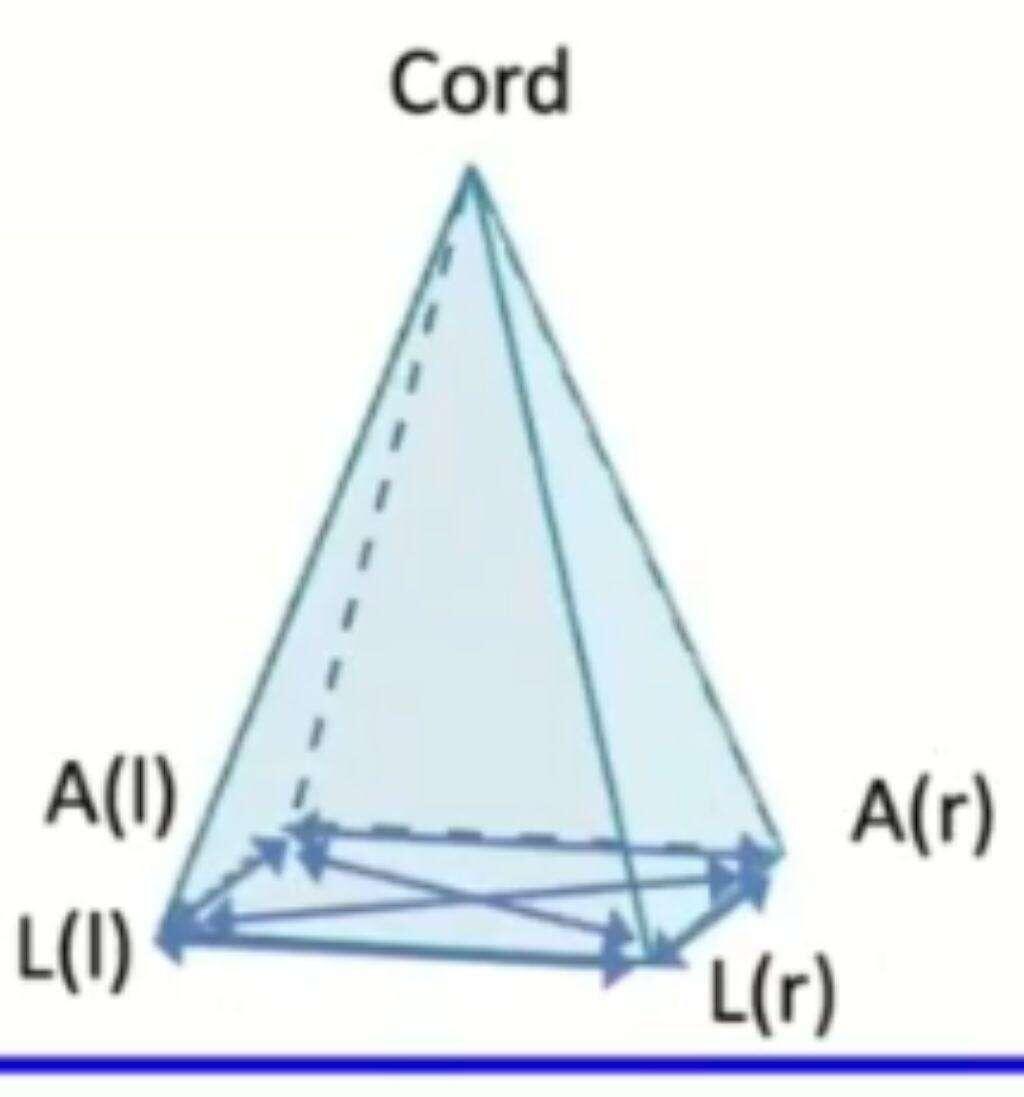

"content": "In stalking the wild pendulum, information density is talked about, e.g.\n\nhttps://image.nostr.build/49dfda903e7786b651b2eed9185fc519429789b33b05e7f4b31d49a383f44932.jpg\n\nBut also in other parts of the book.\n\n\nI just have this perspective based on my background in data science that maps the spirit to these sets of components in latent space, which are basically these components built from eigenvectors sorted by their variance or information density.\n\nThe way this works mathematically is well explained here, where the author starts out with:\n\nImagine a big family dinner where everybody starts asking you about PCA. First, you explain it to your great-grandmother; then to your grandmother; then to your mother; then to your spouse; finally, to your daughter (a mathematician). Each time the next person is less of a layman. Here is how the conversation might go.\n\nhttps://stats.stackexchange.com/questions/2691/making-sense-of-principal-component-analysis-eigenvectors-eigenvalues#140579\n\nAnd then finally in the video above the talks about correlation vs causality, which talks about movements in latent space, which is to say, given a set of components, say you had the pca algorithm generate 5 components. Now the set is sorted by information density, and say each one is represented as a slider. As you move the slider from left to right, each slider represents a move in latent space that changes the output and/or outcome, in the form of a transformation. \n\nWhen applied to a dataset of faces for example, the first slider might be the hair style, the second my be their smile, third might be their eye expression, the last one might not do anything noticeable because of its low information density meaning that it has no real impact on the output and/or outcome.\n\nWhy does this matter?\nIn the second video he talks about moving your x and nothing happens on y, which is the output and/or outcome. \nLet's add some detail to that.\nWe observed a doll move, and we are trying to understand how it works based on our observations. We haven't built a map yet of what makes the doll move (the latent space). \nLet's say we want a smile. We've seen an example of smiling so we know it's possible, but we can't find the dimension that applies to that. We try changing various input vectors, but nothing happens to the output and/or outcome that produces a smile. So we begin mapping out this black box, trying to change different inputs to see what happens on the output. \n\nWe write down what we learn, e.g.\n\nhttps://image.nostr.build/95d9d9eea4c597c66b564cfae2656142b5147e85b7ed6ea2bb580226daabe421.jpg\n\nWe still don't understand the coord.\n\nThen, somehow, someway, we get that ah hah moment. We discover the cord.\n\nhttps://image.nostr.build/571a24bb44f1d5d62be71ce3c6a86f79f2dc7122d5d933e4c5bb8fb6484d99ea.jpg\n\nIt was in another dimension, a higher dimension.\n\nThis is what it feels like to me learning about your work. You have found the cord!",

"sig": "dc401be6ff06548bcc2d0f45bc0741c42cd3fc9cee26f01b73afb1ca688925435746521e1321fea7fa62a8784e2101bbb7bf7a073cfd7717e203d884b5588fc2"

}
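
The note's "sliders in latent space" picture can be sketched in a few lines. This is only an illustration, not code from the note or from the linked answer: the function names `pca` and `move_sliders`, the toy three-feature dataset, and the choice to measure each slider in standard deviations of its component are all assumptions made here. To stay dependency-free it finds the components with power iteration and deflation rather than a library PCA routine.

```python
import math
import random


def pca(rows, k, iterations=500):
    """Return (mean, [(variance, direction), ...]), largest variance first.

    Hypothetical helper: power iteration with deflation on the sample
    covariance matrix, standing in for a library PCA implementation.
    """
    n, d = len(rows), len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - mean[j] for j in range(d)] for r in rows]
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]

    rng = random.Random(0)  # fixed seed so the start vectors are reproducible
    components = []
    for _component in range(k):
        v = [rng.gauss(0, 1) for _ in range(d)]
        length = math.sqrt(sum(x * x for x in v))
        v = [x / length for x in v]
        for _step in range(iterations):
            w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0.0:  # nothing left to explain in this direction
                break
            v = [x / norm for x in w]
        # Rayleigh quotient: the variance captured along direction v.
        cv = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        variance = sum(v[i] * cv[i] for i in range(d))
        components.append((variance, v))
        # Deflate: remove this component so the next pass finds the next one.
        cov = [[cov[i][j] - variance * v[i] * v[j] for j in range(d)]
               for i in range(d)]
    return mean, components


def move_sliders(mean, components, sliders):
    """Map slider positions (in standard deviations) back to feature space."""
    out = list(mean)
    for s, (variance, direction) in zip(sliders, components):
        scale = s * math.sqrt(max(variance, 0.0))
        out = [o + scale * x for o, x in zip(out, direction)]
    return out


if __name__ == "__main__":
    # Toy data (an assumption): two strongly linked features plus faint noise.
    rng = random.Random(1)
    rows = []
    for _ in range(200):
        t, u = rng.gauss(0, 3), rng.gauss(0, 1)
        rows.append([t, t + 0.5 * u, 0.01 * rng.gauss(0, 1)])
    mean, components = pca(rows, 3)
    for index, (variance, _direction) in enumerate(components, start=1):
        print("slider", index, "variance", variance)
    print("first slider +1:", move_sliders(mean, components, [1, 0, 0]))
    print("last slider +1:", move_sliders(mean, components, [0, 0, 1]))
```

Moving the first slider by one unit shifts the reconstructed point far more than moving the last one, which is the sense in which the note says the low-variance slider "might not do anything noticeable".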